If we need to download images so that we can filter them quickly later, and we don't want to spend several hours browsing for the image that meets our requirements, SiteSucker is a much simpler and faster solution than downloading, one by one, every image the browser shows us. SiteSucker lets us download entire web pages, including the images they display and the files and pages of the same website that they link to. This includes comments, searches, and other organization features like categories and tags that are native to WordPress. SiteSucker offers us that and more.

It also lets us work with all that content wherever we are, without an internet connection. If our work, project, or study requires downloading images or documents in large numbers, we should consider an application that downloads web pages with all the content they offer, including links, so that we can manipulate the content whenever and wherever we want. Something like SiteSucker makes a lot more sense than cloning a site for helping folks archive their work so that it stays accessible for the long term, and building that feature into Reclaim Hosting’s services would be pretty cool.

Depending on the type of information we need and what we need it for, we will likely have to download content. All those database-driven sites need to be updated, maintained, and protected from hackers and spam.

HTTrack is a free (GPL, libre/free software) and easy-to-use offline browser utility. It allows you to download a World Wide Web site from the Internet to a local directory, recursively building all directories and getting HTML, images, and other files from the server to your computer. HTTrack arranges the original site's relative link-structure. You can archive a site by making a 'static copy' of it using tools such as HTTrack or SiteSucker. You can also contact us and we can make a copy for you.
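To make the idea of a site downloader more concrete, here is a minimal sketch of one step such a tool has to perform: reading a page and listing the images it shows and the same-site pages it links to. This is not how SiteSucker or HTTrack are implemented; it is an illustration using only the Python standard library, and the names `LinkCollector` and `collect_links` are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect image URLs and same-site page links from one HTML document."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.site = urlparse(base_url).netloc  # host used to detect same-site links
        self.images = []
        self.pages = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and attrs.get("src"):
            # Resolve relative image paths against the page URL.
            self.images.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "a" and attrs.get("href"):
            url = urljoin(self.base_url, attrs["href"])
            # Keep only links that stay on the same website.
            if urlparse(url).netloc == self.site:
                self.pages.append(url)


def collect_links(html, base_url):
    """Return (image_urls, same_site_page_urls) found in the given HTML text."""
    parser = LinkCollector(base_url)
    parser.feed(html)
    parser.close()
    return parser.images, parser.pages
```

A real downloader would then fetch each collected URL, save it to a local directory, and repeat the process for every same-site page it has not visited yet.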
The Internet offers us access to practically anything that comes to mind, including things we did not think to look for, for various reasons that are not relevant here.

At some point, you may wish to move your site off of Dartmouth WordPress and import it to a different platform (or another WordPress site). The files you are importing must be on the same server as your WordPress installation. If you do not have (S)FTP access to the old site, you can use wget or an.

To get the results of JavaScript calculations, you have to actually run the code, and it's not obvious to me that web browsers in, say, 20 years will be backward-compatible enough to run JavaScript that I might write today. Of course, I could probably update my JavaScript to accommodate future changes, but this requires constant vigilance, and there's a risk of introducing bugs along the way. Having a static snapshot of the results of the JavaScript calculations is useful in case the code breaks in the future and you don't have time to fix it. Plus, if you do update the code, once it's up and running again you can check the results of the calculations against the saved PDF files to ensure that you haven't inadvertently messed up the code while fixing it.
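The idea of checking updated code against a saved snapshot can be sketched roughly as follows. This assumes the numbers from the saved snapshot have been copied by hand into a dictionary, and that the updated code's results are available in another dictionary with the same keys; the function name `compare_with_snapshot` and the key names in the usage example are hypothetical.

```python
import math


def compare_with_snapshot(snapshot, recomputed, rel_tol=1e-9):
    """Return the names whose recomputed value differs from the saved one."""
    mismatches = []
    for name, saved in snapshot.items():
        current = recomputed.get(name)
        # A missing value or a value outside the tolerance counts as a mismatch.
        if current is None or not math.isclose(saved, current, rel_tol=rel_tol):
            mismatches.append(name)
    return mismatches
```

For example, `compare_with_snapshot({"total": 12.5}, {"total": 12.5})` returns an empty list, while any changed or missing value shows up by name in the returned list.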

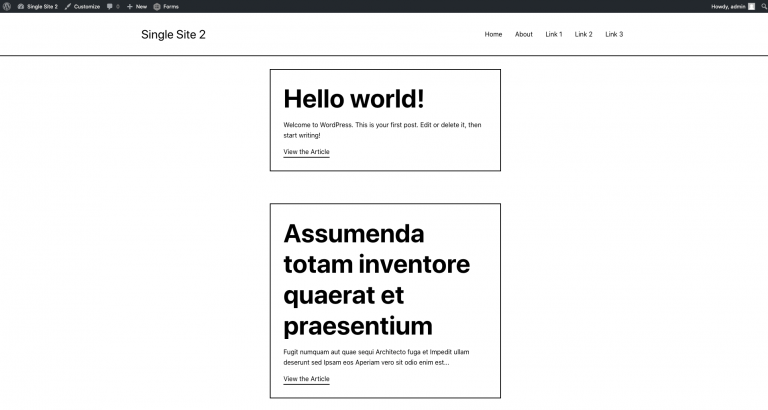

Even if browsers 50 years into the future can't render present-day HTML files, a human with some knowledge of historical HTML tags could still understand 99%, if not 100%, of the HTML just by looking at it in a text editor. However, nontrivial JavaScript calculations are harder to understand just by looking at them.

We ran SiteSucker on all sites within their network, then relaunched on. Migrating Drupal and a Custom CMS to a WordPress Multi-site Network for a Senior.

A regular HTML document is human-readable.
